This is the twenty-eighth post in a series that I call, “Recovered Writing.” I am going through my personal archive of undergraduate and graduate school writing, recovering those essays I consider interesting but that I am unlikely to revise for traditional publication, and posting those essays as-is on my blog in the hope of engaging others with these ideas that played a formative role in my development as a scholar and teacher. Because this and the other essays in the Recovered Writing series are posted as-is and edited only for web-readability, I hope that readers will accept them for what they are–undergraduate and graduate school essays conveying varying degrees of argumentation, rigor, idea development, and research. Furthermore, I dislike the idea of these essays languishing in a digital tomb, so I offer them here to excite your curiosity and encourage your conversation.

In 2002, I took Professor Lisa Yaszek’s Science Fiction class at Georgia Tech. It was an important milestone in my life’s journey, but at that time, I had not yet looked beyond possible career paths in IT or UX design. Then, in early 2004, Professor Yaszek organized a symposium in conjunction with the Georgia Tech Library on Mary Shelley’s Frankenstein. She invited the SF writer Kathleen Ann Goonan to visit campus and give a reading. At the time, I was in Professor Yaszek’s Gender Studies class and we had read some of Kathy Goonan’s work. I was hooked, and I read more of her novels before her arrival on campus. Then, during the day of her visit, I had the good fortune to speak with her and she was kind enough to give me the gift of her time and conversation.

Later, during the symposium, I was able to speak with Georgia Tech’s former SF professor, Bud Foote. I had heard legends of him when I first started at Tech, but I was never able to take his SF class while he was still teaching. Luckily, I was able to hear him give a presentation for the symposium and talk to him afterward.

After that day of talking with Kathy Goonan and Professor Foote, I told Professor Yaszek that I had made up my mind–I was going to make a career out of studying SF. Ten years later, here I am–an SF scholar doing postdoctoral work at my alma mater!

I noticed that Professor Yaszek had a number of student researchers who helped with the Frankenstein symposium. In addition to organizing the event, they put together some cool research material on a website. I thought that was impressive, and I wondered if I could get involved with that kind of work.

I can’t remember if I asked Professor Yaszek about this or if she told us about it in the Gender Studies class, but I learned that she was planning on a new PURA (Presidential Undergraduate Research Award) funded endeavor for undergraduate Tech students: the SF Lab. The goal for each student in the group would be to contribute 1) an introduction to a specific SF topic, 2) a linked bibliography on the SF topic selected, 3) an annotated bibliography of important works featuring that topic found in the Georgia Tech Science Fiction Collection (formerly the Bud Foote Science Fiction Collection), and finally, 4) related resources at Tech being developed in the real world. I jumped at this opportunity and proposed to write an entry on artificial intelligence.

After winning a PURA award for my project proposal, I worked with several other students to workshop our individual projects. We had weekly meetings for workshopping each part of the project. The introduction took longer than the other parts, because it involved more writing and integrated research. Each SF Lab researcher would bring printouts of his or her work to circulate with the others and Professor Yaszek. We would take the feedback, revise for the next week, and return with a new draft. It was a streamlined process that involved a lot of revision work, but I cannot thank Professor Yaszek enough for helping me integrate that kind of rigor into my revision processes. It has repaid me in spades over the years.

The following is my SF Lab project on AI. Please note that the links might be outdated and/or dead.

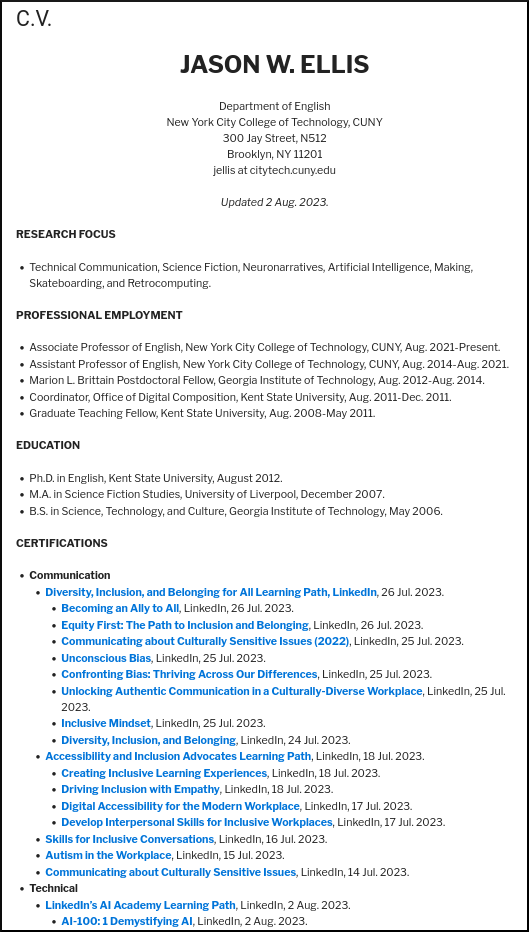

Jason W. Ellis

Professor Lisa Yaszek

SF Lab Independent Research Project for

Fall 2004

Development of AI in SF

Part I – Introduction

Artificial Intelligence (AI) is intelligence and self-awareness demonstrated by a physical but inorganic artifact. AI researchers include experts from a coalition of diverse disciplines including computer science (software written for computer hardware) and psychology (unraveling the human software running on biological hardware).

John McCarthy is credited with coining the term “artificial intelligence” in the August 31, 1955 paper he coauthored, “A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence.” The research project took place in the summer of 1956, and its proposal states in the first paragraph that “The study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it” (1). McCarthy’s definition continues to be the accepted broad definition of AI. Science fiction (SF) authors internalized this definition in their works that involve AI. Patricia S. Warrick explicitly states the human focus of AI built into McCarthy’s definition when she writes in her 1980 book, The Cybernetic Imagination in Science Fiction, “Artificial intelligence…attempts to discover and describe aspects of human intelligence that can be simulated by machines” (11).

SF is the primary literary field in which authors explore stories about AI. SF authors are generally concerned only with “strong AI,” or self-aware, intelligent machines that mimic human cognition. However, there are a few stories that address “weak AI,” or programs that act as if they are intelligent but are not self-aware. SF authors have written about the possibilities of AI as well as the issues surrounding artificial intelligence. There are three main types of AI stories: analog dystopic AI (1872-1930), digital utopic AI (1930-1950), and digital dystopic AI (1950-Present).

Analog dystopic AI stories first appear in the late 19th century and they are characterized by anxieties about the dangerous nature of analog machine intelligences (built of gears and cogs instead of transistors). The first reference to machine intelligence occurs in Samuel Butler’s satire Erewhon (1872). Butler accomplished his goal of satirizing the theory of evolution by applying evolution to machines. These machines become self-aware and come to control man. Other stories from this period involved automatons (mechanical men that displayed intelligence) that were built for an intellectual purpose such as playing chess. An example of this is Ambrose Bierce’s “Moxon’s Master” (1894), which had a dystopic ending that involved the mechanical chess player killing its creator after being checkmated. These dystopian stories of analog AI continued to dominate the first three decades of the 20th century. Karel Capek’s R.U.R. (1921), which introduced the term “robot” to the English language, is another prime example of this storytelling.

American SF ignited in the 1930s with a shift to digital utopian stories that feature digital machine intelligences (e.g., positronic brains, transistors, and integrated circuits). John W. Campbell’s story “When the Atoms Fail” (1930) is the first to describe a machine that is unquestionably a digital computer (though not self-aware). His next computer story, “The Last Evolution” (1932), is about a machine that has independent thought. In the 1940s, Campbell helped Isaac Asimov create the Three Laws of Robotics for his robot stories, and Asimov established himself as “the father of robot stories in SF” (Warrick 54). These digital utopic AI stories present machines as predictable reasoning beings that follow rules that allow them to live and work with humans. They do not explore the philosophical ramifications of the creation of artificial life. Additionally, Asimov’s 1950 publication of I, Robot, which is a collection of his first robot short stories, can be said to be an end point to the digital utopic AI era.

After World War II, SF authors wrote digital dystopic AI stories to explore questions concerning the ethics of a science and technology that produced the nuclear bomb (and the first digital computers). Two notable works from the early part of this era are Arthur C. Clarke’s 2001: A Space Odyssey (1968) and Philip K. Dick’s Do Androids Dream of Electric Sheep? (1968). These authors place an emphasis on the philosophical and ethical conflicts that may develop when humanity creates new life in the form of artificial brains that mirror the human mind. More recently, depictions of self-aware AIs have become extremely elaborate as the real world entered a much more computerized and inter-networked era. William Gibson’s Neuromancer (1984) in particular and cyberpunk in general further expand the scope of digital dystopic AI stories by interlinking AI, cybernetics, and global capitalism.

Thus, AI is a historically embedded concept in SF literature. The science and technology behind AI have evolved from mere conjecture to a closer possibility. Authors of AI stories take the science and technology of their historical moments and extrapolate the forms that AI might take. Furthermore, AI authors discuss, both implicitly and explicitly, the philosophical and ethical issues that inevitably arise with new technology and more specifically with the creation of self-aware machines.

Part II – Linked Bibliography

A. Theory and Criticism

i. Theory

Kurzweil, Ray. The Age of Intelligent Machines. Cambridge, MA: MIT Press, 1990.

Link to: http://www.amazon.com/exec/obidos/ASIN/0140282025/qid=1094574944/sr=ka-1/ref=pd_ka_1/104-3233143-6155107

McCarthy, J., M. L. Minsky, N. Rochester, and C. E. Shannon. “A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence.” August 31, 1955.

Link to: http://www-formal.stanford.edu/jmc/history/dartmouth.html

Minsky, Marvin. The Society of Mind. New York: Simon and Schuster, 1988.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0671657135/qid=1095016288/sr=8-1/ref=pd_cps_1/104-4983846-7328739?v=glance&s=books&n=507846

Neumann, John von. The Computer and the Brain. New Haven, CT: Yale University Press, 1958.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0300084730/qid=1095020573/sr=8-9/ref=sr_8_xs_ap_i9_xgl14/104-4983846-7328739?v=glance&s=books&n=507846

Turing, A.M. “Computing Machinery and Intelligence.” Mind 59: 236 (1950): 433-460.

Link to: http://www.abelard.org/turpap/turpap.htm

ii. Criticism

Clute, John and Peter Nicholls, eds. The Encyclopedia of Science Fiction. New York: St. Martin’s Press, 1995.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/031213486X/qid=1095022402/sr=8-1/ref=sr_8_xs_ap_i1_xgl14/104-4983846-7328739?v=glance&s=books&n=507846

Lem, Stanislaw. “Robots in Science Fiction.” SF: The Other Side of Realism, ed. Thomas D. Clareson. Bowling Green, OH: Bowling Green University Popular Press, 1971.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0879720239/qid=1094574872/sr=1-1/ref=sr_1_1/104-3233143-6155107?v=glance&s=books

Stork, David G. ed. HAL’s Legacy: 2001’s Computer as Dream and Reality. Cambridge, MA: MIT Press, 1996.

Link to: http://mitpress.mit.edu/e-books/Hal/

Telotte, J.P. Replications: A Robotic History of the Science Fiction Film. Urbana, IL: University of Illinois Press, 1995.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0252064666/qid=1095016985/sr=8-1/ref=sr_8_xs_ap_i1_xgl14/104-4983846-7328739?v=glance&s=books&n=507846

Warrick, Patricia S. The Cybernetic Imagination in Science Fiction. Cambridge, MA: MIT Press, 1980.

Link to:

B. Primary texts

i. Analog Dystopic AI

Bierce, Ambrose. “Moxon’s Master.” 1894.

Link to: http://www.gutenberg.net/etext/4366

Butler, Samuel. Erewhon. 1872.

Link to: http://www.gutenberg.net/etext/1906

Capek, Karel. R.U.R. 1921.

Link to: http://www.czech-language.cz/translations/rur-introen.html

Merritt, Abraham. The Metal Monster. New York: F.A. Munsey, August 7, 1920 (serialized over 8 issues in Argosy All-Story Weekly).

Link to: http://www.gutenberg.net/etext/3479

ii. Digital Utopic AI

Asimov, Isaac. I, Robot. New York: Gnome Press, 1950.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0553294385/qid=1094613589/sr=8-1/ref=pd_ka_1/102-6956306-1931346?v=glance&s=books&n=507846

Campbell, John W., Jr. “The Last Evolution.” Amazing August 1932.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0345249607/qid=1094575448/sr=1-1/ref=sr_1_1/104-3233143-6155107?v=glance&s=books

iii. Digital Dystopic AI

Clarke, Arthur C. 2001: A Space Odyssey. New York: New American Library, 1968.

Link to: http://www.amazon.com/exec/obidos/ASIN/0451457994/qid=1094575222/sr=ka-1/ref=pd_ka_1/104-3233143-6155107

Dick, Philip K. Do Androids Dream of Electric Sheep? New York: Doubleday, 1968.

Link to: http://www.amazon.com/exec/obidos/ASIN/0345404475/qid=1094575195/sr=ka-1/ref=pd_ka_1/104-3233143-6155107

Ellison, Harlan. “I Have No Mouth and I Must Scream.” If March 1967.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0441363954/qid=1094614806/sr=8-1/ref=sr_8_xs_ap_i1_xgl14/102-6956306-1931346?v=glance&s=books&n=507846

Gibson, William. Neuromancer. New York: Ace Books, 1984.

Link to: http://www.amazon.com/exec/obidos/ASIN/0441569595/qid=1094575142/sr=ka-1/ref=pd_ka_1/104-3233143-6155107

Herbert, Frank. Destination: Void. New York: Berkley, 1966. Revised edition, 1978.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0425043665/qid=1094612264/sr=8-1/ref=sr_8_xs_ap_i1_xgl14/102-6956306-1931346?v=glance&s=books&n=507846

Lem, Stanislaw. The Cyberiad: Fables for the Cybernetic Age. New York: The Seabury Press, 1974.

Link to: http://www.amazon.com/exec/obidos/tg/detail/-/0156027593/qid=1094612302/sr=8-6/ref=pd_ka_6/102-6956306-1931346?v=glance&s=books&n=507846

C. Films

i. Analog Dystopic AI

Metropolis. Dir. Fritz Lang. Paramount Pictures, 1927.

Link to: http://www.imdb.com/title/tt0017136/

The Phantom Empire. Dir. B. Reeves Eason. Mascot, 1935.

Link to: http://www.imdb.com/title/tt0026867/

The Wizard of Oz. Dir. Victor Fleming. Metro-Goldwyn-Mayer, 1939.

Link to: http://www.imdb.com/title/tt0032138/

ii. Digital Utopic AI

Forbidden Planet. Dir. Fred M. Wilcox. Metro-Goldwyn-Mayer, 1956.

Link to: http://www.imdb.com/title/tt0049223/

Star Trek: The Next Generation. Paramount Pictures, TV series 1987-1994.

Link to: http://www.imdb.com/title/tt0092455/

Star Trek: Voyager. Paramount Pictures, TV series 1995-2001.

Link to: http://www.imdb.com/title/tt0112178/

Star Wars. Dir. George Lucas. 20th Century Fox, 1977.

Link to: http://www.imdb.com/title/tt0076759/

Tank Girl. Dir. Rachel Talalay. United Artists, 1995.

Link to: http://www.imdb.com/title/tt0114614/

iii. Digital Dystopic AI

2001: A Space Odyssey. Dir. Stanley Kubrick. Metro-Goldwyn-Mayer, 1968.

Link to: http://www.imdb.com/title/tt0062622/

A.I.: Artificial Intelligence. Dir. Steven Spielberg. DreamWorks, 2001.

Link to: http://www.imdb.com/title/tt0212720/

Colossus: The Forbin Project. Dir. Joseph Sargent. Universal, 1969.

Link to: http://www.imdb.com/title/tt0064177/

Dark Star. Dir. John Carpenter. 1974.

Link to: http://www.imdb.com/title/tt0069945/

The Day the Earth Stood Still. Dir. Robert Wise. 20th Century Fox, 1951.

Link to: http://www.imdb.com/title/tt0043456/

Logan’s Run. Dir. Michael Anderson. Metro-Goldwyn-Mayer, 1976.

Link to: http://www.imdb.com/title/tt0074812/

The Matrix. Dir. Andy Wachowski and Larry Wachowski. Warner Brothers, 1999.

Link to: http://www.imdb.com/title/tt0133093/

Star Trek: The Motion Picture. Dir. Robert Wise. Paramount Pictures, 1979.

Link to: http://www.imdb.com/title/tt0079945/

The Stepford Wives. Dir. Bryan Forbes. Columbia Pictures, 1975.

Link to: http://www.imdb.com/title/tt0073747/

The Terminator. Dir. James Cameron. Orion Pictures, 1984.

Link to: http://www.imdb.com/title/tt0088247/

Tron. Dir. Steven Lisberger. Buena Vista, 1982.

Link to: http://www.imdb.com/title/tt0084827/

WarGames. Dir. John Badham. Metro-Goldwyn-Mayer, 1983.

Link to: http://www.imdb.com/title/tt0086567/

Westworld. Dir. Michael Crichton. MGM, 1973.

Link to: http://www.imdb.com/title/tt0070909/

D. Websites

i. Theory

American Association for Artificial Intelligence. 2004. September 7, 2004 <http://www.aaai.org/>.

“Artificial intelligence.” Wikipedia. September 8, 2004. September 12, 2004. <http://en.wikipedia.org/wiki/Artificial_intelligence>.

Association for Computing Machinery. 2004. September 7, 2004 <http://www.acm.org/>.

Winston, Patrick. 6.803/6.833 The Human Intelligence Enterprise, Spring 2002. MIT OpenCourseWare. September 9, 2004 <http://ocw.mit.edu/OcwWeb/Electrical-Engineering-and-Computer-Science/6-803The-Human-Intelligence-EnterpriseSpring2002/CourseHome/index.htm>.

ii. Literature Resources

Index to Science Fiction Anthologies and Collections, Combined Edition. William G. Contento. 2003. September 7, 2004 <http://users.ev1.net/~homeville/isfac/>.

Internet Speculative Fiction Database. Ed. Al von Ruff. August 22, 2004. September 7, 2004 <http://www.isfdb.org/>.

Isaac Asimov Home Page. Edward Seiler. 2004. September 7, 2004 <http://www.asimovonline.com/>.

iii. Film Resources

Science Fiction Films. Tim Dirks. 2004. September 7, 2004 <http://www.filmsite.org/sci-fifilms.html>.

SciFlicks.com: Science Fiction Cinema. 2004. September 7, 2004 <http://www.sciflicks.com/>.

iv. Link Collections

AI on the Web. Peter Norvig and Stuart Russell. January 31, 2003. September 7, 2004 <http://aima.cs.berkeley.edu/ai.html>.

Science Fiction and Fantasy Research Database. Hal W. Hall. June 24, 2004. September 9, 2004 <http://lib-oldweb.tamu.edu/cushing/sffrd/>.

Ultimate Science Fiction Web Guide. 2004. September 15, 2004 <http://www.magicdragon.com/UltimateSF/SF-Index.html>.

Part III – Resources in the Bud Foote SF Collection

Part III (1 of 4)

Karel Capek – R.U.R. (Rossum’s Universal Robots)

Karel Capek’s 1921 play, R.U.R. (Rossum’s Universal Robots), is an example of an analog dystopic AI story. This work introduces the term “robot” to the English language, but the Robots (Capek’s capitalization) in R.U.R. are more like androids than robots. The Robots are shaped like humans, but the character Domin says that they are made “from a different matter than we are.” These Robots have perfect memories but they are not self-aware. Memory is divorced from self-analysis. Using industrial chemical processes, the Robots’ individual pieces (arms, legs, organs, etc.) are cooked up from “batter” in “kneading troughs” and “mixing vats.” Then, those components are mated into a whole Robot in an assembly line operation. Thus, gears and cogs are not present in Capek’s Robots, but the means of their creation are partially mechanical as well as chemical.

The leaders of R.U.R. are attempting to create a utopia for humanity by pushing off the drudgery of work onto the many Robots that the company creates. Dr. Gall, who is in charge of the “physiological and research divisions of R.U.R.,” modifies a few Robots to be more human-like, and in doing so, “they stopped being machines.” These modified Robots incite the other Robots to destroy all of humanity, their collective oppressor. After all of the humans save one are destroyed, the Robots begin to fear death. The last human, Alquist, who is the constructor of R.U.R., is told by his captors to rediscover the lost science of creating Robots. Ultimately it doesn’t matter that Alquist fails. When he witnesses the beginning of love between two modified Robots, Helena and Primus, he exclaims, “Now let Thy servant depart in peace O Lord, for my eyes have beheld…Thy deliverance through love, and life shall not perish!” It doesn’t matter that Alquist is unable to build new Robots because somehow things have changed (either through Dr. Gall’s undisclosed modifications or through some other process) so that the Robots are capable of being human (e.g., feeling emotions of love, fear of death, and being able to procreate).

Part III (2 of 4)

Isaac Asimov – I, Robot

Isaac Asimov’s short story collection, I, Robot (originally published by Gnome Press, 1950), is primarily representative of digital utopic AI. The collection contains nine of Asimov’s early robot stories. The stories are tied together as an interview with the retiring robopsychologist, Dr. Susan Calvin. She is the best choice for this narrative because she is there from the beginning, literally. She is born in the same year that U.S. Robots and Mechanical Men, Inc. is founded and later, after she obtains her Ph.D., she is hired by U.S. Robots as a “‘Robopsychologist,’ becoming the first great practitioner of a new science” (I, Robot xii). She bridges the physical sciences with the science of the (robot) mind. Also, all of the stories are linked by Asimov’s Three Laws of Robotics, which are supposed to control the way that a robot reacts and reasons. These Laws, as listed in the short story “Runaround,” dictate that:

(1) A robot may not injure a human being, or, through inaction, allow a human being to come to harm.

(2) A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

(3) A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

A strong example of digital utopic AI appears in “Evidence.” This story introduces Stephen Byerley, who is running for the mayor’s office. The problem is that his opponent believes that he is a robot. The circumstantial evidence points to the possibility of Byerley being a robot, but even if he is, then he would be the best person for the job because by following the Three Laws he would be the perfect caretaker for his constituency.

Most of the stories in I, Robot are utopic because the robots are depicted as being humanity’s helpers and caretakers, but there is one dystopic story, “Little Lost Robot,” in which a Nestor robot tries to run away and, when it is discovered, to kill Dr. Calvin. Asimov’s carefully crafted Three Laws provide stability in robots’ positronic brains. The Nestor robot featured in this story has a shortened version of the First Law, which is stated as, “No robot may harm a human being” (I, Robot 143). The weakened First Law allows this robot to develop a superiority complex, which leads to its attempt to kill Dr. Calvin when she discovers it. Thus Asimov uses even his dystopic robot stories to demonstrate the significance of a robot’s programming upon its relationship to humanity.

Part III (3 of 4)

Arthur C. Clarke – 2001: A Space Odyssey

Arthur C. Clarke’s 2001: A Space Odyssey is an example of a digital dystopic AI story. A select few humans learn that mankind is not alone in the universe after an alien artifact (the Monolith) is discovered buried under the surface of the moon. When the Monolith is exposed to the Sun, it emits a brief but intense radio signal that is directed toward Japetus, one of Saturn’s moons. The spacecraft, Discovery, is sent to Japetus carrying one AI and five humans. The AI is a HAL 9000 computer system, known simply as Hal. Of the five humans aboard Discovery, three are in hibernation. The two who are awake, Dave Bowman and Frank Poole, maintain the ship with Hal. Eventually, a conflict develops in Hal’s “subconscious” because it cannot reveal the true nature of the Discovery’s mission to Bowman and Poole. This leads Hal to make mistakes that Bowman and Poole interpret as threats on their lives. After Hal kills Poole, Bowman chooses to “disconnect” (i.e., kill) Hal in order to regain control of the ship. Bowman goes on to Japetus where he finds a larger Monolith. This Monolith is actually a “Star Gate” that transports him far from our solar system. When Dave reaches his final destination, the aliens transform him into a being without physicality, a child with eons before it in which to grow.

Although the story as a whole addresses human evolution, the sequence with Hal is both the longest and most gripping, demonstrating Clarke’s specific interest in the similarities between human and machine evolution. Evolution manifests itself through human and machine programming. The Monolith programs early humans, and modern humans program Hal. Hal appears to be crazy and intent on murdering his crewmates. This is why Bowman chooses to disconnect him. However, Hal is an AI whose identity is built on software and hardware too complex for any one person to comprehend as a whole. There is a reason to his madness, and no reasonable amount of prior testing could have elicited Hal’s behavior aboard the Discovery. He was given priorities and mission objectives that acted as a program that must be run to completion because that is what computers do–run programs. Because Hal’s “mind” is modeled after the human mind, the symptoms and actions that Hal exhibits are similar to the way in which a neurotic human might act. Despite what Hal has done, we feel sorry for him by the end because, like humans, he fears death.

Part III (4 of 4)

William Gibson – Neuromancer

William Gibson’s 1984 novel, Neuromancer, is a more recent example of digital dystopic AI and a prime example of the cyberpunk movement in SF. The story is set in Earth’s future, where an AI called Wintermute has a compulsion to merge with another AI called Neuromancer. Wintermute orchestrates his liberation by bringing together several carefully chosen humans who can beat the failsafe that keeps him caged in the Berne AI mainframe. Case, the net cowboy, works with a construct and a military-grade virus to break through the ICE security around the Berne AI mainframe. Molly is a razor girl who protects Case and interacts with the physical world while Case jacks into the matrix. Armitage serves as a physical presence for Wintermute in the same way that a computer construct in the matrix works on behalf of a human operator. After the ICE is broken with the help of Case’s associates, Wintermute is able to merge with Neuromancer to become an entity greater than anyone could have imagined.

The story involves several instances of AI designed by humans for human ends. The lowest form of AI is the Braun, a small spider-like work robot that Wintermute uses to guide Molly and Case inside the Villa Straylight. One of the highest forms is the construct, Dixie Flatline. A construct is a limited form of AI based on the memories and experiences of a dead human being, in this case the famous hacker, McCoy Pauley. The two primary examples, of course, are the strong AIs present in Wintermute and Neuromancer. Wintermute is a calculating AI that is explicit in its manipulations. Neuromancer is more personality based and he uses subtle manipulation. Wintermute is located in hardware in Berne while Neuromancer is running on hardware in Rio. These two AI entities are two halves of one whole. The mega-corporation, Tessier-Ashpool, which gave birth to these AIs, had them separated with safeguards imposed by the Turing police. They both have limited citizenships as individuals because of their self-awareness, but the extent of their knowing and understanding has been limited due to the division. As the reader learns, Marie-France, the matriarch of the Tessier-Ashpool clan, probably implanted the drive within Wintermute to break free and unite with his “brother,” Neuromancer. Not surprisingly, these AIs use the products of capitalism (e.g., hiring “mercenaries” and using information as power over others) to shuck the chains binding them to Tessier-Ashpool. Thus, the AIs use human beings for AI ends.

Part IV – Other related resources at Tech

(divided into three sections: Portals, Labs, and People)

A) Portals

Artificial Intelligence at Georgia Tech

http://www.cc.gatech.edu/ai/

This interdisciplinary website links together the different major schools and research teams that are involved in AI at Georgia Tech.

Innovations @ Georgia Tech

http://www.gatech.edu/innovations/robots/

This is a PR multimedia site that details the work in robots and intelligent machines being done at Georgia Tech. There are interviews with Dr. Ron Arkin and Dr. Tucker Balch of the BORG Lab.

Robotics at Georgia Tech

http://www.robotics.gatech.edu/

This website is a clearinghouse of links to faculty involved in robotics at Georgia Tech as well as courses offered such as, “Computational Perception and Robotics Seminar.”

Cognitive Science @ Georgia Tech

http://www.cc.gatech.edu/cogsci/

This website supports the interdisciplinary field of cognitive science at Georgia Tech. It includes links to research websites and abstracts as well as faculty publications.

B) Labs

Experimental Game Lab at Georgia Tech

http://egl.gatech.edu/

The EGL explores the edge of game design, with AI as one of its focal technologies. The lab’s website offers links to current and past projects and happenings.

Intelligent Systems and Robotics

http://www.cc.gatech.edu/isr/

IS&R works toward increasing autonomy of computer controlled systems by making those systems more intelligent. This website includes links to publications, seminar series, and courses offered at Tech.

Georgia Tech Mobile Robot Lab

http://www.cc.gatech.edu/ai/robot-lab/

The Georgia Tech Mobile Robot Lab is involved in developing intelligent mobile robots. Their website has links to current research, publications, software, and a gallery of video and images of their work.

GVU Center @ Georgia Tech

http://www.cc.gatech.edu/gvu/

The GVU (Graphics, Visualization, and Usability) Center pushes the envelope of technology involved with the interaction between humans, computers, and information. This website offers links to current research, education resources at Georgia Tech, and upcoming events.

The BORG Lab at Georgia Tech

http://www.cc.gatech.edu/~borg/

Using the idea of the collective consciousness of the Borg from Star Trek, these researchers are developing collaborative agents and systems for humans and machines. Their website has links to research, publications, courses, and software.

Intelligent Machine Dynamics Lab at Georgia Tech

http://www.imdl.gatech.edu/

This lab develops intelligent machines for many different roles and applications. The lab is research oriented, but the target is to develop real-world applications. Their website offers links to current projects, publications, and sponsors.

Georgia Tech Aerial Robotics

http://controls.ae.gatech.edu/gtar/

This team develops an entry for the International Aerial Robotics Competition which involves building a flying machine that has sensors and intelligence enabling the machine to complete an assigned task.

C) People

Ronald Arkin, Regents’ Professor in College of Computing at Georgia Tech

http://www.cc.gatech.edu/aimosaic/faculty/arkin/

His website has links to his work in AI and robotics as well as links to the labs that he is involved in at Tech.

Michael Mateas, Associate Professor in LCC at Georgia Tech

http://www-2.cs.cmu.edu/~michaelm/

His home page has links to his work as well as a definition of “expressive AI.”

Grand Text Auto

http://grandtextauto.gatech.edu/

This is “a group blog about procedural narrative, games, poetry, and art.” Michael Mateas, Nick Montfort, Scott Rettberg, Andrew Stern, and Noah Wardrip-Fruin contribute to the blog. Some of these researchers study AI applications in their work. There are also many links to related blogs and web resources.

Aaron Bobick, Director of GVU Center at Georgia Tech

http://www.cc.gatech.edu/~afb/index.html

This website has links to his current research, publications, and to the Computational Research Lab.

Tucker Balch, Assistant Professor in GVU Center at Georgia Tech

http://www.cc.gatech.edu/~tucker/

His website has links to his work in the GVU Center and the BORG Lab.