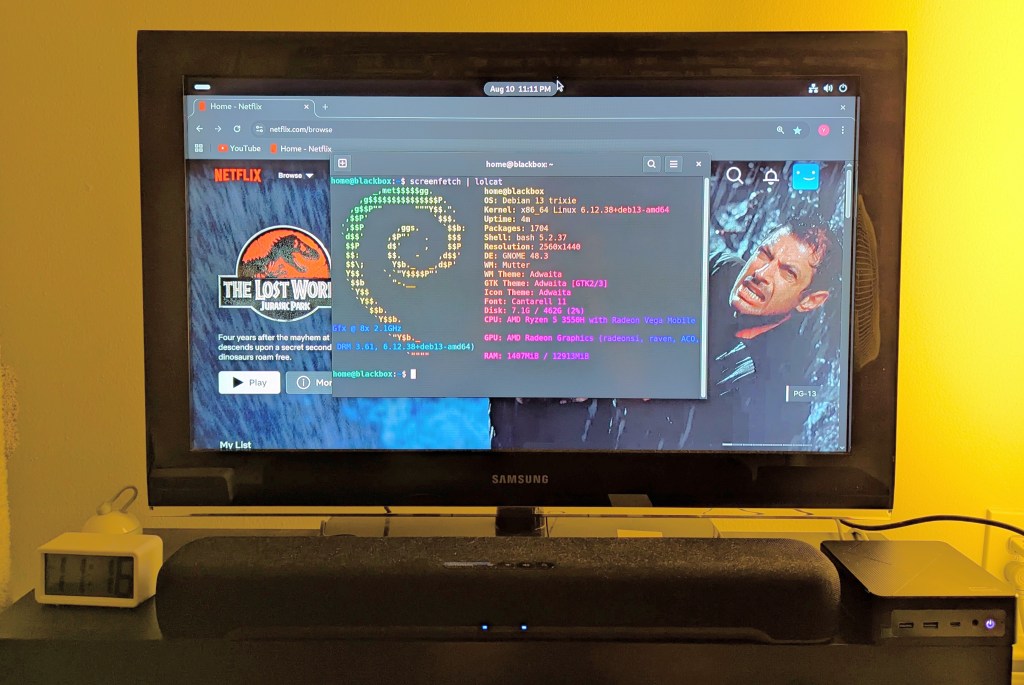

Last week, I upgraded the Debian 12 Bookworm installation on my workstation to Debian 13 Trixie. After I updated my sources file and ran `apt update` and `apt full-upgrade`, the upgrade went painlessly and quickly. However, I noticed some glitches with video playback (audio stutter) and with listing directory contents on a hard disk drive (prolonged delays even though the drive was in active use and not waking from sleep). I wasn’t interested in spending time tracking down the source of these particular problems, so I decided to do a nuke-and-pave full reinstall of Debian 13.

That led to three days of frustration and reinstalling Debian 13 five times. I now have a stable installation that does what I want, but it took a lot of bashing my forehead into the desk to get here.

To be fair, I created some of the problems myself by trying to configure software that I don’t understand deeply, using advice shared online that might be years old and written for older versions of those programs.

The problem that caused the most headaches wasn’t my fault, as far as I can suss out. The installer seemed to alternate which of my two identical 2TB NVMe drives it assigned as the “zero” drive. This strange behavior led to me wiping both drives and installing Debian 13 on each of them over the course of the multiple installations. When it comes to partitioning, I’m super cautious, because even though my data was backed up, I still didn’t want to wipe a drive unless that was what I intended to do.

Besides the Debian 13 installer’s partitioning software, I wondered if it could have something to do with my motherboard’s BIOS. I am a few versions behind the latest release, but none of the BIOS change logs mention anything to do with code for handling NVMe drives.
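For anyone in the same spot, one way to tell two identical drives apart is by serial number rather than by the "zero" or "one" name, since kernel names like `nvme0n1` are not guaranteed to stay attached to the same physical drive from one boot to the next. The following is a minimal sketch, not something I ran during these installs. It assumes a Linux system where udev populates `/dev/disk/by-id`, and the function name `map_nvme_ids` is made up for illustration.

```python
import os
import re


def map_nvme_ids(by_id_dir="/dev/disk/by-id"):
    """Return {persistent id: kernel device path} for whole NVMe drives.

    Assumes udev has created symlinks such as nvme-<model>_<serial>
    in by_id_dir; returns an empty dict if the directory is missing.
    """
    mapping = {}
    if not os.path.isdir(by_id_dir):
        return mapping
    for name in sorted(os.listdir(by_id_dir)):
        # Only look at NVMe links, and skip partition links (…-part1 etc.)
        if not name.startswith("nvme-") or re.search(r"-part\d+$", name):
            continue
        # Resolve the symlink to the kernel name it points at right now
        mapping[name] = os.path.realpath(os.path.join(by_id_dir, name))
    return mapping


if __name__ == "__main__":
    for disk_id, device in map_nvme_ids().items():
        print(f"{device}  <-  {disk_id}")
```

Running something like this before and after a reboot should show whether the two serial numbers trade kernel names. Matching the serial in the output against the label on the physical drive is a safer check before partitioning than trusting the drive number alone.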

I didn’t document my experience as I should have, because I got to a point where I just wanted a stable system so that I could get some work done. I accomplished that, at least. Still, I wanted to put this up as a potential warning in case anyone else experiences something similar.